Gaussian Process Tutorial

12/5/2020

This sampling looks even clearer if we generate more independent Gaussians and connect the points in order with lines.

This happened to me after I finished reading the first two chapters of the textbook Gaussian Processes for Machine Learning [1]. There is a gap between using GP and feeling comfortable with it, because the theory is difficult to understand. When I was reading the textbook and watching tutorial videos online, I could follow the majority without too much difficulty.

But even when I tried to explain to myself what GP is, the big picture was still blurry. After repeatedly trying to understand GP from various resources, including textbooks, blog posts, and open-source code, I sorted out my understanding and summarize it here from my own perspective.

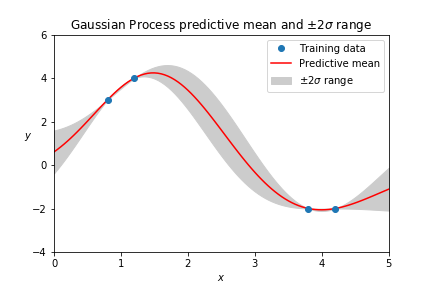

If you are familiar with these, start reading from III. Math. Readers at an entry or medium level in deep learning (application level), without a solid understanding of machine learning theory, may find GP even more confusing.

Regression can be done with polynomials, and it is common for more than one function to fit the observed data. Besides getting predictions from the function, we also want to know how certain these predictions are. Moreover, quantifying uncertainty is very valuable for an efficient learning process.

The Gaussian (or Normal) distribution of a random variable $x$ is usually written as $x \sim \mathcal{N}(\mu, \sigma^2)$, where $\mu$ is the mean and $\sigma^2$ the variance. We generate $n$ random sample points from a Gaussian distribution on the x axis. On the other hand, we can model data points by assuming they are Gaussian, model them as a function, and perform regression with it. As shown above, we estimated a kernel density and a histogram of the generated points. The kernel density estimate looks like a normal distribution because there are plenty of observation points ($n = 1000$) to produce this Gaussian-looking PDF. In regression, even if we don't have that many observations, we can model the data as a function that follows a normal distribution if we assume a Gaussian prior. We sampled the generated dataset and got a Gaussian bell curve.
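A minimal sketch of this sampling experiment, assuming a standard normal ($\mu = 0$, $\sigma = 1$) and using only the Python standard library: it draws $n = 1000$ samples, bins them into a histogram, and builds a Gaussian kernel density estimate to compare against the exact density $p(x) = \frac{1}{\sqrt{2\pi\sigma^2}} \exp\left(-\frac{(x-\mu)^2}{2\sigma^2}\right)$. The helper names, the seed, the bin range, and the rule-of-thumb bandwidth $h = 1.06\,\hat\sigma\,n^{-1/5}$ are illustrative assumptions, not the settings behind the original plots.

```python
import math
import random


def sample_gaussian(n, mu=0.0, sigma=1.0, seed=0):
    """Draw n independent samples from N(mu, sigma^2) with a fixed seed."""
    rng = random.Random(seed)
    return [rng.gauss(mu, sigma) for _ in range(n)]


def histogram(samples, lo=-4.0, hi=4.0, bins=16):
    """Count samples in equal-width bins on [lo, hi); values outside are ignored."""
    width = (hi - lo) / bins
    counts = [0] * bins
    for x in samples:
        if lo <= x < hi:
            # min() guards against floating-point rounding pushing x past the last bin
            counts[min(int((x - lo) // width), bins - 1)] += 1
    return counts


def gaussian_kde(samples):
    """Gaussian kernel density estimate with bandwidth h = 1.06 * std * n^(-1/5)."""
    n = len(samples)
    mean = sum(samples) / n
    std = math.sqrt(sum((x - mean) ** 2 for x in samples) / (n - 1))
    h = 1.06 * std * n ** (-1 / 5)
    scale = 1.0 / (n * h * math.sqrt(2 * math.pi))

    def density(x):
        # Average of Gaussian bumps of width h centred on every sample
        return scale * sum(math.exp(-0.5 * ((x - xi) / h) ** 2) for xi in samples)

    return density


def normal_pdf(x, mu=0.0, sigma=1.0):
    """Exact N(mu, sigma^2) density, for comparison with the KDE."""
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2 * math.pi))


samples = sample_gaussian(1000)  # n = 1000, as in the text
counts = histogram(samples)
kde = gaussian_kde(samples)
for x in (-2.0, -1.0, 0.0, 1.0, 2.0):
    print(f"x={x:+.1f}  KDE={kde(x):.3f}  true pdf={normal_pdf(x):.3f}")
```

With far fewer samples, both the histogram and the KDE become noticeably bumpier, which is why a large $n$ helps the estimate look like the Gaussian bell curve.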

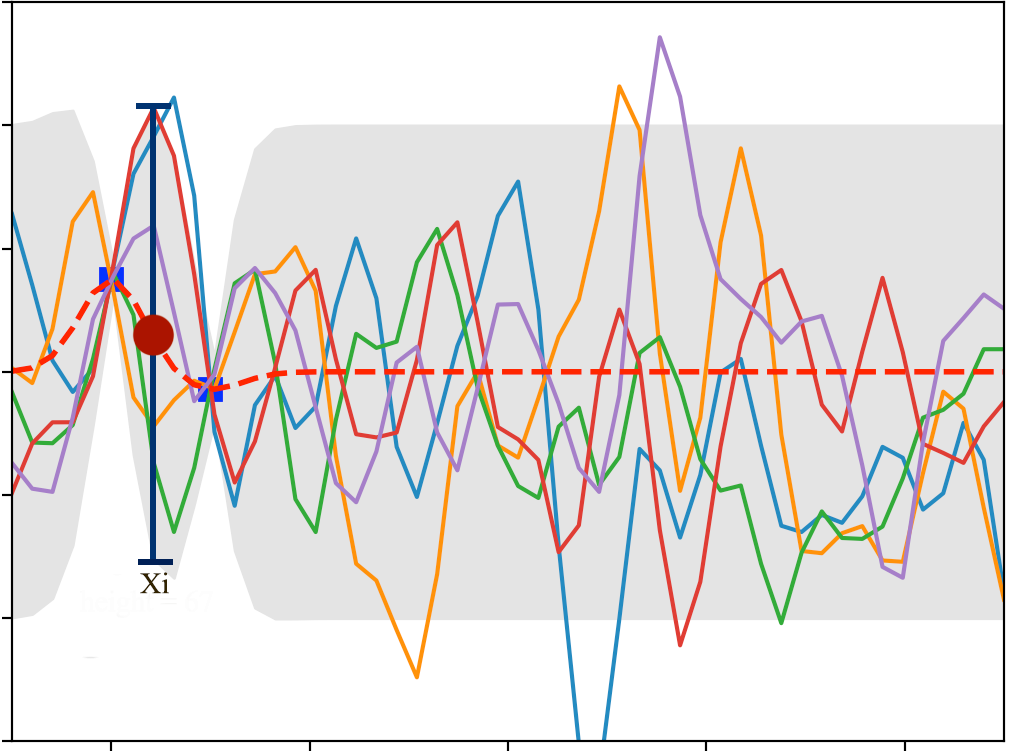

It looks like we did nothing but plot the vector points vertically. Next, we can plot multiple independent Gaussians in the same coordinates. For now, we only generate 10 random points for each of two independent Gaussians, and then join them up as 10 lines. Keep in mind that these 10 randomly generated points are Gaussian. On the other hand, the plot also looks like we are sampling the region with 10 linear functions, even though there are only two points on each line. From the sampling perspective, the domain is our region of interest, i.e.
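The construction above can be sketched in standard-library Python. It draws 10 samples from each of two independent standard normals and groups them into 10 two-point lines. The function names and the choice of placing the $j$-th Gaussian at horizontal position $j$ are assumptions made for illustration. Raising `num_gaussians` corresponds to generating more independent Gaussians and connecting their points in order.

```python
import random


def sample_independent_gaussians(num_lines=10, num_gaussians=2, seed=0):
    """Draw num_lines samples from each of num_gaussians independent N(0, 1) variables.

    Row i holds one draw from every Gaussian, so connecting its entries in
    order gives line i of the plot.
    """
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(num_gaussians)] for _ in range(num_lines)]


def to_polylines(draws):
    """Place Gaussian j at horizontal position j and pair it with its sampled value."""
    return [[(j, y) for j, y in enumerate(row)] for row in draws]


# Two independent Gaussians, 10 draws each -> 10 lines with two points per line
lines = to_polylines(sample_independent_gaussians())
for line in lines:
    print(line)

# More independent Gaussians: each of the 10 lines now visits 20 positions
dense = to_polylines(sample_independent_gaussians(num_lines=10, num_gaussians=20))
```

Each line can be drawn by passing its x and y values to a plotting call such as matplotlib's `plt.plot(xs, ys)`. Because every value is drawn independently, neighbouring points on a line are unrelated, so with many Gaussians the lines zigzag randomly.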
